Post by: Anis Karim

Biometric data — such as facial scans, fingerprints, iris patterns, voiceprints, and behavioral signatures — was once reserved for high-security environments. Today, it's embedded in everyday life. Phones unlock with our faces, apps verify our identities using voice or selfies, smart home systems recognise gestures, and countless platforms encourage biometric verification for convenience.

But as more industries adopt biometrics, the questions around storage, consent, data portability, deletion rights, and potential misuse have intensified. Recent debates highlight how biometric information is far more sensitive than regular personal data. Unlike a password, you cannot “reset” your face or fingerprint if it leaks. And with AI-powered systems capable of mimicking, generating, or spoofing biometric traits, the risks have grown even more complicated.

This makes biometric privacy not just a technical issue but a personal safety issue. Users must now take proactive steps to understand how their biometrics are collected, where they are stored, and how to minimise unnecessary exposure.

Most users think biometrics means only fingerprints and facial recognition, but modern systems capture far more. Biometric identifiers now include:

facial geometry and micro-expressions

fingerprints and palm-prints

retina and iris patterns

voice patterns and tone signatures

gait patterns and posture

typing rhythm and touchscreen behaviour

vein patterns

ear shape

heart-rate signatures from wearables

With technology detecting even the subtler forms of identity, privacy protection must become equally detailed.

Biometric data is extremely sensitive for several reasons:

Once compromised, it cannot be changed the way passwords can.

Biometric identifiers can tie your identity to actions, locations, and behaviours indefinitely.

Cameras, microphones, and sensors can capture biometric traits passively.

Deepfake technology, synthetic voice systems, and AI-generated facial models make it easier to imitate or spoof a leaked biometric trait.

Biometric tracking can occur across devices, platforms, borders, and systems.

Users must treat biometric privacy with the same seriousness as financial or medical data — perhaps even more.

Below is a comprehensive, practical, and user-ready set of actions to safeguard biometric privacy in daily digital life.

Most biometric risks stem from oversharing. Users often provide fingerprints, facial scans, or voice samples to platforms unnecessarily.

To reduce exposure:

Avoid enabling facial recognition in apps that don’t genuinely require it.

Choose password or PIN verification where possible.

Decline biometric login prompts on websites that don’t need them.

Avoid uploading face videos or voice samples to identity-verification apps unless essential.

Question whether a platform truly needs your biometric identity or is merely collecting it for convenience.

Opt for the least intrusive option every time.

Phones, laptops, and wearables default to biometric recognition because it increases convenience. But you don’t need it everywhere.

Recommended settings adjustments:

Disable “face unlock” and replace it with a strong passcode.

Turn off fingerprint unlock on devices you rarely carry outside.

Disable voice-assistant wake words that constantly analyse speech.

Turn off attention tracking, eye-tracking, or emotion-analysis features.

Restrict gesture-based recognition from cameras when not needed.

These steps limit how much of your body your device continuously analyses.

Apps often request camera, microphone, or sensor access for reasons unrelated to their actual function.

Review permissions every month:

Remove camera access for apps that don’t need it.

Revoke microphone access from apps you don’t use for calls.

Restrict background activity for apps using sensors aggressively.

Disable permission for apps that track movement or behaviour unnecessarily.

Many platforms collect biometric-adjacent data silently, so permissions must be monitored.
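A monthly review like this can be kept as a simple checklist. The sketch below is one hypothetical way to do it: the app names, permission labels, and both tables are invented for illustration and would be filled in by hand from your device's settings screen. It only compares what each app holds against what you judge it needs.

```python
# Hypothetical inventory: permissions each app currently holds (copied by hand from settings).
GRANTED = {
    "flashlight": {"camera", "microphone", "location"},
    "video_calls": {"camera", "microphone"},
    "step_counter": {"motion_sensors", "microphone"},
}

# Hypothetical judgement of what each app needs for its core function.
NEEDED = {
    "flashlight": {"camera"},  # the camera flash only
    "video_calls": {"camera", "microphone"},
    "step_counter": {"motion_sensors"},
}


def excess_permissions(granted, needed):
    """Return {app: sorted list of permissions granted but not needed}."""
    report = {}
    for app, perms in granted.items():
        extra = perms - needed.get(app, set())
        if extra:
            report[app] = sorted(extra)
    return report


if __name__ == "__main__":
    for app, extra in excess_permissions(GRANTED, NEEDED).items():
        print(f"{app}: consider revoking {', '.join(extra)}")
```

Any app missing from the "needed" table is treated as needing nothing, so every permission it holds is flagged for review.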

Popular face filters may look like harmless fun, but they rely heavily on facial mapping. These apps can gather:

detailed face geometry

contours of expression

iris movement

smile patterns

eyebrow and jawline recognition

This data can be used to train algorithms far beyond entertainment. Limit filter-heavy apps, and avoid programs that require multiple angles of your face to produce 3D scans.

Voice biometrics are increasingly used for banking, insurance, and customer support. But voice cloning software can replicate voices using very small samples.

Protect yourself by:

avoiding voice verification when alternatives exist

using numeric codes instead of “say your name to continue” prompts

refusing voiceprint enrolment with service providers

The fewer voice samples stored in databases, the safer you are.

Some platforms allow users to request deletion of biometric identifiers.

You should:

delete unused face and fingerprint data from outdated apps

request removal from identity-verification services you no longer use

reset biometric profiles on devices before selling or giving them away

check online accounts for hidden biometric enrolments

Many companies keep biometric identifiers long after they are no longer needed — unless users explicitly remove them.

Using biometrics often becomes a fallback for weak passwords. To avoid relying on your face or fingerprints:

create unique multi-word passphrases

enable multi-factor authentication

use authenticator apps instead of SMS

rotate passwords for critical accounts each year

avoid storing passwords in browsers

The stronger your traditional security, the less frequently you’ll be pushed toward biometric login systems.
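As a rough illustration of the first tip, a multi-word passphrase can be generated with Python's standard `secrets` module. This is a minimal sketch, not a recommended tool: the 16-word list is a placeholder assumption (a real list should contain thousands of words), and the function names are invented for this example.

```python
import math
import secrets

# Tiny illustrative word list (an assumption); a real list should hold thousands of words.
WORDS = [
    "amber", "bridge", "cactus", "dolphin", "ember", "falcon", "garnet", "harbor",
    "island", "jungle", "kettle", "lantern", "meadow", "nectar", "orchid", "pebble",
]


def make_passphrase(words, count=5, sep="-"):
    """Join `count` words chosen with a cryptographically secure random source."""
    return sep.join(secrets.choice(words) for _ in range(count))


def entropy_bits(list_size, count):
    """Estimated entropy in bits when each word is drawn uniformly from the list."""
    return count * math.log2(list_size)


if __name__ == "__main__":
    print(make_passphrase(WORDS))
    print(f"{entropy_bits(len(WORDS), 5):.1f} bits with this toy list")
```

With only 16 words, five words give just 20 bits, which is far too weak. A list of 7,776 words gives about 12.9 bits per word, which is why the size of the list matters as much as the number of words.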

Wearables capture extremely sensitive data such as heartbeat patterns, gait signatures, stress patterns, blood oxygen, and even micro-movements.

Do this to protect your wearable data:

disable continuous heart-rate scanning

turn off gesture or posture-based unlock features

delete old workout logs

avoid syncing data with unnecessary third-party apps

restrict Bluetooth connectivity when outside

Wearables are gateways to behavioural biometrics that many users overlook.

Modern spaces — airports, malls, offices, and even public streets — use cameras with facial recognition or crowd analytics.

To minimise exposure:

avoid looking directly into commercial security cameras when possible

wear sunglasses or hats in high-surveillance zones

disable Bluetooth and Wi-Fi scanning when walking through busy areas

avoid participating in public face-scanning kiosks or “smart queue” systems

Even without explicit consent, public surveillance can extract biometric patterns.

Photos and videos containing detailed facial features or unique physical traits should never be shared lightly.

Avoid:

sending selfies to unknown contacts

uploading high-resolution face images to unsecured platforms

scanning your face in apps with opaque policies

backing up facial images to cloud platforms with weak encryption

Images that look harmless to you may be used to reconstruct biometric profiles.

Children are increasingly exposed to biometric risks. Parents should:

avoid enrolling children in biometric ID systems

disable face-unlock features on kids’ devices

restrict children’s access to filters requiring facial scans

prevent voice assistants from building child voice profiles

limit which smart toys and ed-tech devices capture data

Children’s biometric information must be protected early, because children can neither give meaningful consent nor understand the long-term consequences.

Some devices allow users to decide whether to store biometrics locally or in cloud-based systems.

Best practice:

always choose on-device storage for fingerprints or face data

never opt into cloud-syncing for biometric keys

avoid services that require uploading biometric scans for storage

choose platforms that process biometric data on the device itself

The safest biometric is the one that never leaves your device.

Before giving a company your biometric identity, ask:

Where is my biometric data stored?

Is it encrypted on-device or uploaded to servers?

Can I delete it anytime?

Do you use my biometric data to train machine-learning models?

Who has access internally?

How long do you retain it?

Companies must be transparent, and users must be assertive.

Even patterns like scrolling behaviour, typing rhythm, and interaction speed can count as behavioural biometrics. Limit exposure by:

disabling behavioural tracking in apps

avoiding websites with aggressive tracking cookies

clearing cookies and site data regularly, and enabling any anti-fingerprinting protection your browser offers

using privacy-focused browsers

limiting third-party analytics on mobile apps

Behavioural biometrics are harder to identify but equally crucial to protect.
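One reason these signals are hard to shake off is that a tracker can derive a stable identifier from device and browser characteristics alone, without storing anything on your device. The sketch below illustrates the general idea under stated assumptions: the attribute names and values are invented, and real trackers combine many more signals than this.

```python
import hashlib
import json


def fingerprint(attributes):
    """Hash a dictionary of observable attributes into a short, stable identifier."""
    canonical = json.dumps(attributes, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


# Hypothetical attributes a site could observe on a visit.
visit_1 = {
    "user_agent": "ExampleBrowser/1.0",
    "screen": "1920x1080",
    "timezone": "UTC+4",
    "fonts": ["Arial", "Noto Sans"],
}

# The same device after clearing cookies: nothing observable has changed.
visit_2 = dict(visit_1)

# The same device with one attribute altered.
changed = dict(visit_1, timezone="UTC")

if __name__ == "__main__":
    print(fingerprint(visit_1) == fingerprint(visit_2))  # same identifier
    print(fingerprint(visit_1) == fingerprint(changed))  # different identifier
```

Because the identifier is recomputed from what the device reveals, deleting stored data does not change it. Reducing or standardising what the browser exposes is what makes a difference, which is the approach privacy-focused browsers take.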

Many AI-based apps can generate avatars, modify voices, or create 3D facial models. These tools often require extremely detailed face or voice samples.

Avoid:

uploading full 360° facial videos

providing long voice recordings

participating in “create your digital twin” services

allowing apps to generate personalised voice assistants using your voice

AI reconstruction is one of the biggest emerging privacy threats.

The debate around biometric data has revealed a deep truth: biometrics are a part of our core identity. Their misuse can impact safety, finances, digital identity, travel, and even personal reputation.

Users must adopt a mindset that treats biometric traits the same way one treats a passport, bank PIN, or medical record. They must remain cautious, informed, and selective.

The convenience biometrics offer does not outweigh the long-term risk of uncontrolled data exposure. Awareness and proactive action are now mandatory personal responsibilities.

As biometric systems become integrated into daily life, users must take control of their digital identity. Protecting biometric data is not a matter of paranoia—it's a matter of safeguarding irreplaceable personal traits in a world where technology can replicate, misuse, or exploit them. By minimising biometric sharing, controlling permissions, reducing digital exposure, securing wearables, and questioning platforms with assertiveness, every user can take meaningful steps toward protecting their privacy.

Biometric privacy is no longer a future concern — it is a present necessity.

This article provides general guidance on biometric privacy. Users with specific security needs should consult cybersecurity professionals for personalised recommendations.

US Stocks Slide as AI Fears, Inflation and Oil Surge Weigh

US stocks dropped as AI disruption fears hit tech firms, inflation rose above forecasts, and oil pri

Pacific Prime Wins Top Honors at Cigna Awards 2026

Pacific Prime secured Top Individual Broker and Top SME Broker awards at Cigna’s Annual Broker Award

QatarEnergy Halts LNG Output After Military Attack

QatarEnergy has stopped LNG production after military attacks hit its facilities in Ras Laffan and M

Strong 6.1 Magnitude Earthquake Hits West Sumatra, No Damage

A 6.1 earthquake struck off West Sumatra, Indonesia. No casualties, damage, or tsunami alert reporte

Saudi Confirms Drone Strike on US Embassy Riyadh

Two drones hit the US Embassy in Riyadh, causing a small fire and minor damage. No injuries were rep

UAE Restarts Limited Flights as Regional Airspace Disruptions Continue

UAE restarts limited flights from Dubai as US-Israel attacks on Iran disrupt regional airspace, forc

Asia Faces Energy Shock After Iran Closes Strait

Iran shuts Strait of Hormuz amid US-Israel strikes, sending oil prices higher and raising serious en

Bank of Baroda Faces Abu Dhabi Legal Battle over NMC Collapse

Bank of Baroda’s involvement in Abu Dhabi litigation tied to the NMC Healthcare collapse raises repu

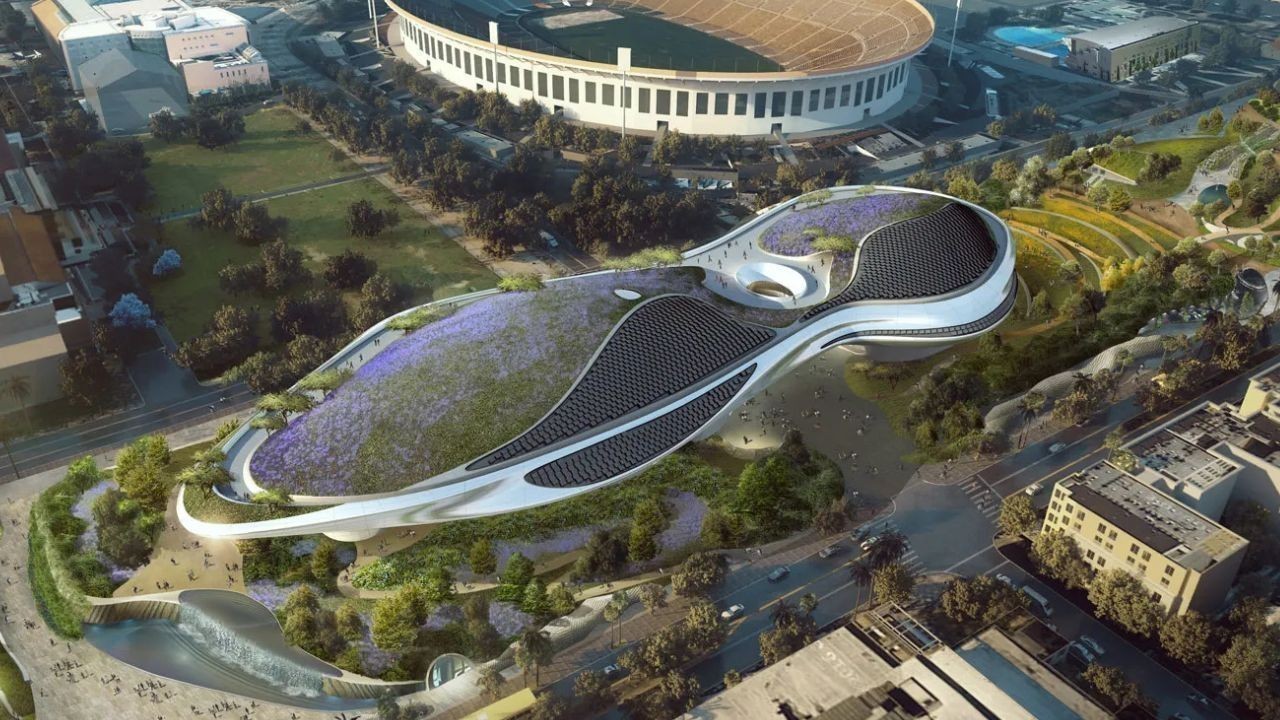

Top Museum Openings of 2026 Set to Transform Global Tourism

From Los Angeles to Abu Dhabi and Brussels, 2026 brings major museum launches—Lucas Museum, Guggenhe

UAE Tour Highlights UAE’s Strength in Hosting Global Sports Events

Abu Dhabi Sports Council says the successful UAE Tour reflects the UAE’s leading role in hosting maj

EU Seeks Clarity from US After Supreme Court IEEPA Ruling

European Commission urges full transparency from the US on steps after Supreme Court ruling, emphasi

SpaceX Launches 53 New Satellites for Expanding Starlink Network

SpaceX launches 53 Starlink satellites in two Falcon 9 missions, breaking reuse records and expandin

RTA Awards Contract for Phase II of Hessa Street Upgrade in Dubai

Phase II of Hessa Street Development to add bridges, tunnel, and upgraded intersections, doubling ca

UAE Gold Prices Today, Monday 16 February 2026: Dubai & Abu Dhabi Updated Rates

Gold prices in UAE on 16 Feb 2026 updated: 24K around AED 599.75/gm, 22K AED 555.25/gm, and 18K AED

Over 25 Ahmedabad Schools Receive Bomb Threat Email, Authorities Investigate

More than 25 schools in Ahmedabad evacuated after bomb threat emails mentioning Khalistan. Authoriti